论文:https://arxiv.org/pdf/2407.17490 https://github.com/YuxiangChai/AMEX-codebase/tree/main/data_utils https://huggingface.co/datasets/Yuxiang007/AMEX

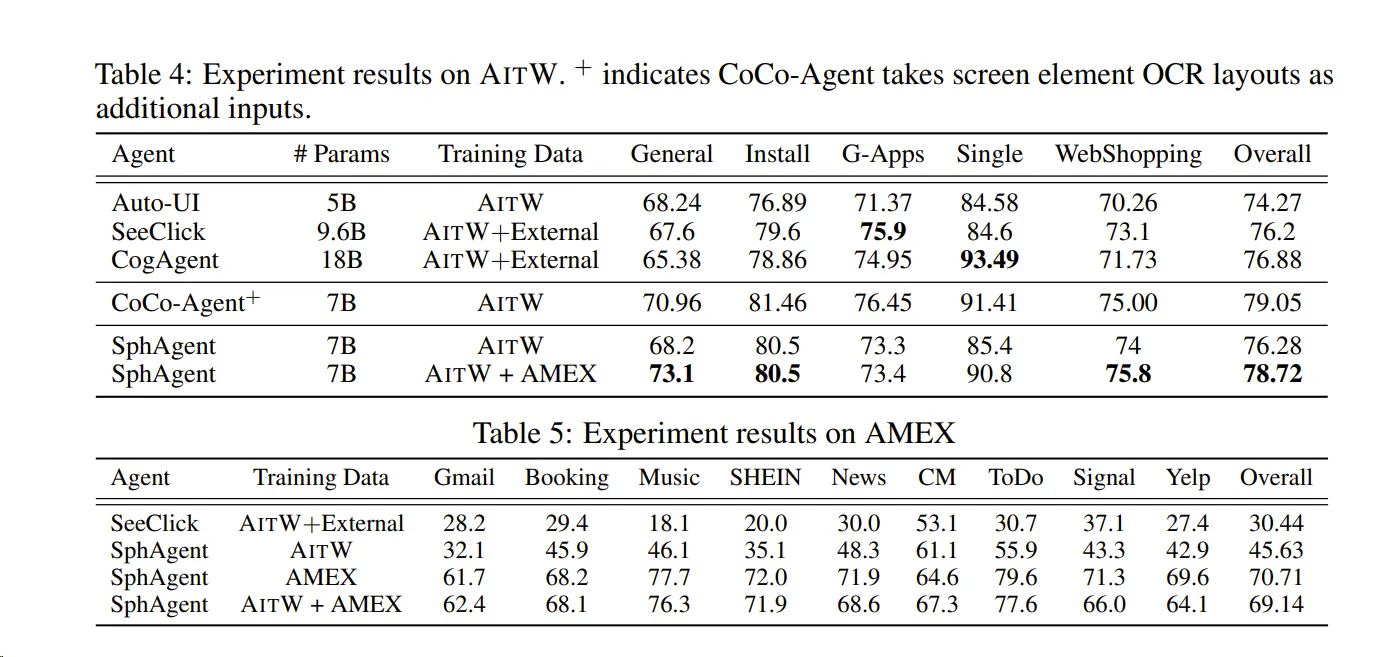

AMEX数据集包括三个层次的注释:

- GUI交互元素定位 :

- 分类两类交互元素:可点击元素和可滚动元素。

- GUI屏幕和元素功能描述 :

- 使用GPT生成屏幕和元素的功能描述,并进行人工检查以确保准确性。

- 复杂自然语言指令与GUI动作链 :

- 每条指令平均包含12.8个步骤的动作链,显著高于现有数据集。

在 Ubuntu 22.04 上,我们经常需要查看当前的网络带宽占用情况,尤其是下载大文件时,了解实时的下载速度可以帮助我们判断网络状况或管理下载任务。本文将介绍几种简单有效的方法来监控 Ubuntu 22.04 的下载带宽,包括终端工具和图形界面方案。

为什么极点在左半平面(LHP)系统就会稳定?

在控制系统中,极点的位置决定了系统的动态响应和稳定性。具体原因如下:

-

时域响应分析: • 对于连续时间系统,传递函数的极点 对应的时域模态为 。

• 若极点 在左半平面(LHP)(即 ),则 会指数衰减,系统最终趋于稳定。

• 若极点 在右半平面(RHP)(即 ),则 会指数发散,系统不稳定。

• 若极点在虚轴上(),则系统处于临界稳定(如持续振荡)。

-

稳定性判据: • BIBO稳定性(有界输入有界输出):所有极点必须在左半平面。

• Lyapunov稳定性:对于线性系统,LHP极点等价于渐近稳定。

LQR控制原理与倒立摆系统实践指南

一、问题背景:惯性轮倒立摆控制 我们面对的是一个典型的欠驱动系统——惯性轮倒立摆(Inertia Wheel Pendulum)。系统通过控制惯性轮电机产生反扭矩来维持摆杆竖直平衡。系统参数如下:

配置

构建新的镜像:

展开代码docker build --network=host --build-arg http_proxy=http://10.136.19.26:10828 --build-arg https_proxy=http://10.136.19.26:10828 -f Dockerfile -t kevinchina/deeplearning:vlmr1-0501 . # 进容器装环境: apt-get update apt-get install libibverbs1 pip config set global.index-url https://mirrors.tuna.tsinghua.edu.cn/pypi/web/simple pip install babel python-Levenshtein matplotlib pycocotools timm==1.0.15 # Addtional modules pip install wandb==0.18.3 pip install tensorboardx pip install qwen_vl_utils torchvision pip install flash-attn --no-build-isolation pip install babel pip install python-Levenshtein pip install matplotlib pip install pycocotools pip install openai pip install httpx[socks] pip install json_repair

展开代码docker commit 4411ba9deb19 kevinchina/deeplearning:vlmr1-0501-1

在MATLAB中,将状态空间模型转换为传递函数可以通过以下步骤完成:

方法一:使用 ss 和 tf 函数

- 定义状态空间矩阵:

matlab展开代码

A = [...]; % 状态矩阵 B = [...]; % 输入矩阵 C = [...]; % 输出矩阵 D = [...]; % 直接传输矩阵 - 创建状态空间对象:

matlab展开代码

sys_ss = ss(A, B, C, D); - 转换为传递函数:

matlab展开代码

sys_tf = tf(sys_ss); - (可选)约简传递函数(消除共同零极点):

matlab展开代码

sys_tf = minreal(sys_tf);

状态空间方程与传递函数的关系详解

在控制系统中,状态空间方程和传递函数是两种常用的数学模型,它们分别代表了现代控制理论和经典控制理论的核心工具。本文将深入探讨它们之间的内在联系与相互转换方法,并通过实例解析帮助读者更好地理解这两种模型的关系。

状态空间方程(State-Space Equation)是现代控制理论中描述动态系统的核心数学模型,它将系统的输入、输出和内部状态变量通过矩阵形式关联起来。以下是详细解释:

在带有惯性轮的倒立摆系统中,使用拉格朗日方程进行分析时,确定摆杆角度和惯性轮角度的方程右边的步骤如下:

- 系统建模与广义坐标 • 摆杆角度:定义为摆杆与垂直方向的夹角,记为 。

• 惯性轮角度:定义为惯性轮相对于摆杆的转角,记为 。惯性轮的绝对转角为 。

本地模型目录需要是git仓库:

- 初始化 Git 仓库(如果尚未初始化) 如果目录没有初始化过 Git,需要先执行:

bash展开代码git init

- 添加 Hugging Face 远程仓库

bash展开代码git remote add origin https://huggingface.co/hugxd/InternVL2_8B_Point_to_Box